In statistical physics, entropy is a measure of the disorder of a system. Here, disorder refers to the number of microscopic configurations, W, that a thermodynamic system can have when it is in a state specified by certain macroscopic variables (volume, energy, pressure, and temperature). By “microscopic states”, we mean the exact states of all the molecules making up the system.

Mathematically, the exact definition is:

Entropy = (Boltzmann’s constant kB) × (natural logarithm of the number of possible states W)

S = kB ln W

This equation, which relates the microscopic details, or microstates, of the system (via W) to its macroscopic state (via the entropy S), is the key idea of statistical mechanics. In an isolated system, entropy never decreases, so the entropy of the Universe is irreversibly increasing. In an open system (for example, a growing tree), entropy can decrease and order can increase, but only at the expense of increasing entropy somewhere else (e.g., in the Sun).
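Boltzmann's relation can be evaluated numerically. The following is a minimal sketch, assuming a toy system of N independent particles that can each be in one of two states, so that W = 2^N. The function names and the particle counts are illustrative and do not come from the original text.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant in J/K (exact SI value)


def boltzmann_entropy(num_microstates: float) -> float:
    """Return S = k_B * ln(W) in J/K for W equally likely microstates."""
    if num_microstates < 1:
        raise ValueError("W must be at least 1")
    return K_B * math.log(num_microstates)


def two_state_entropy(n_particles: int) -> float:
    """Entropy of N independent two-state particles, where W = 2**N.

    Uses ln(2**N) = N * ln(2) so that no huge integer has to be built.
    """
    return n_particles * K_B * math.log(2)


print(boltzmann_entropy(1))    # a single possible microstate gives zero entropy
print(two_state_entropy(100))  # entropy of 100 two-state particles, in J/K
print(two_state_entropy(200))  # doubling N squares W and doubles S
```

Because the logarithm turns products into sums, combining two independent systems multiplies their microstate counts but adds their entropies.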

Order is decreasing.

Entropy is increasing.

Units of Entropy

The SI unit for entropy is J/K. Clausius defined entropy through its change, ∆S: when an amount of heat Q is added to a system by a reversible process at a constant temperature T, the entropy of the system changes by the ratio Q/T.

Here Q is the energy transferred as heat to or from the system during the process, and T is the system’s temperature in kelvins during the process. If we assume a reversible isothermal process, the total entropy change is given by:

∆S = S2 – S1 = Q/T

In this equation, the quotient Q/T is related to the increase in disorder. Higher temperature means greater randomness of motion. At lower temperatures, adding heat Q causes a substantial fractional increase in molecular motion and randomness. On the other hand, if the substance is already hot, the same quantity of heat Q adds relatively little to the already large molecular motion.
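The temperature dependence described above can be seen by evaluating ∆S = Q/T for the same heat at several temperatures. This is a minimal sketch: the heat of 1000 J and the three temperatures are arbitrary illustrative values, and the function name is hypothetical.

```python
def entropy_change_isothermal(heat_j: float, temperature_k: float) -> float:
    """Return Q / T in J/K for reversible heat transfer at constant T."""
    if temperature_k <= 0:
        raise ValueError("temperature must be positive (kelvins)")
    return heat_j / temperature_k


heat = 1000.0  # J, illustrative value
for temperature in (100.0, 300.0, 1000.0):  # K, illustrative values
    delta_s = entropy_change_isothermal(heat, temperature)
    print(f"T = {temperature:6.1f} K  ->  dS = {delta_s:.2f} J/K")
```

The same quantity of heat produces a tenfold larger entropy change at 100 K than at 1000 K.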

Example: Entropy change in melting ice

Calculate the change in entropy of 1 kg of ice at 0°C, when melted reversibly to water at 0°C.

Since it is an isothermal process, we can use:

∆S = S2 – S1 = Q/T

therefore the entropy change will be:

∆S = 334 [kJ] / 273.15 [K] = 1.22 [kJ/K]

where 334 kilojoules of heat are required to melt 1 kg of ice (latent heat of fusion = 334 kJ/kg) and this heat is transferred to the system at 0°C (273.15 K).
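The calculation above can be reproduced in a few lines. This sketch uses the latent heat and temperature given in the example. The function name and the `mass_kg` parameter are illustrative assumptions.

```python
LATENT_HEAT_FUSION = 334.0  # kJ/kg, latent heat of fusion from the example
MELTING_POINT = 273.15      # K, equal to 0 degrees Celsius


def melting_entropy_change(mass_kg: float) -> float:
    """Entropy change in kJ/K when mass_kg of ice melts reversibly at 0 C."""
    heat_kj = mass_kg * LATENT_HEAT_FUSION  # Q = m * L
    return heat_kj / MELTING_POINT          # dS = Q / T


print(f"{melting_entropy_change(1.0):.2f} kJ/K")
```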

Specific Entropy

The entropy can be made into an intensive or specific variable by dividing by the mass. Engineers use the specific entropy in thermodynamic analysis more than the entropy itself. The specific entropy (s) of a substance is its entropy per unit mass. It equals the total entropy (S) divided by the total mass (m).

s = S/m

where:

s = specific entropy (J/(kg·K))

S = entropy (J/K)

m = mass (kg)
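To illustrate the difference between the extensive entropy S and the intensive specific entropy s, the sketch below reuses the melting-ice figures from the earlier example (334 kJ/kg at 273.15 K). The function names and sample masses are illustrative assumptions.

```python
LATENT_HEAT_FUSION = 334.0  # kJ/kg, from the melting-ice example
MELTING_POINT = 273.15      # K


def entropy_of_melting(mass_kg: float) -> float:
    """Total entropy change S in kJ/K for melting mass_kg of ice at 0 C."""
    return mass_kg * LATENT_HEAT_FUSION / MELTING_POINT


def specific_entropy(entropy_kj_per_k: float, mass_kg: float) -> float:
    """Return s = S / m in kJ/(kg*K)."""
    if mass_kg <= 0:
        raise ValueError("mass must be positive")
    return entropy_kj_per_k / mass_kg


for mass in (1.0, 2.5, 10.0):  # kg, illustrative sample masses
    total = entropy_of_melting(mass)
    per_kg = specific_entropy(total, mass)
    print(f"m = {mass:5.1f} kg  S = {total:7.3f} kJ/K  s = {per_kg:.3f} kJ/(kg*K)")
```

The total entropy change grows with the amount of ice, while the specific value stays the same for every mass.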

Entropy quantifies the energy of a substance that is no longer available to perform useful work. Because entropy tells so much about the usefulness of an amount of heat transferred in performing work, the steam tables include values of specific entropy (s = S/m) as part of the information tabulated.

In general, specific entropy is a property of a substance, like pressure, temperature, and volume, but it cannot be measured directly. Normally, the entropy of a substance is given relative to some reference value. For example, the specific entropy of water or steam is given using the reference that the specific entropy of saturated liquid water is zero at the triple point (0.01°C), where s = 0.00 kJ/(kg·K). The fact that the absolute value of specific entropy is unknown is not a problem, however, because it is the change in specific entropy (∆s), and not the absolute value, that matters in practical problems.

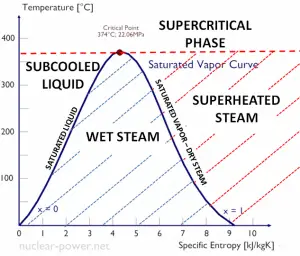

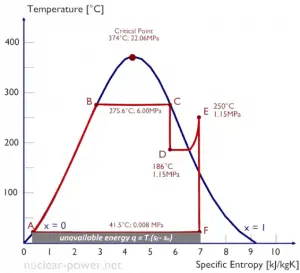

Temperature-entropy Diagrams – T-s Diagrams

The phases of a substance and the relationships between its properties are most commonly shown on property diagrams. A large number of different properties have been defined, and there are some dependencies between properties.

A Temperature-entropy diagram (T-s diagram) is the type of diagram most frequently used to analyze energy transfer system cycles. It is used in thermodynamics to visualize changes to temperature and specific entropy during a thermodynamic process or cycle.

The work done by or on the system and the heat added to or removed from the system can be visualized on the T-s diagram. By the definition of entropy, the heat transferred to or from a system equals the area under the T-s curve of the process.

dQ = TdS
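The area interpretation can be checked numerically. The following is a minimal sketch, assuming liquid water with a constant specific heat of 4.18 kJ/(kg·K) heated reversibly from 300 K to 350 K. These values, the function names, and the step count are assumptions chosen for illustration. Under that assumption ds = c dT/T, so s(T) = c ln(T/T1) relative to the start state, and the area under the T-s curve should equal c (T2 − T1).

```python
import math

C_WATER = 4.18  # kJ/(kg*K), assumed constant specific heat of liquid water


def heating_path(t_start: float, t_end: float, steps: int = 1000):
    """Return (entropies, temps) along reversible heating at constant c.

    Specific entropy is measured relative to the start state:
    s(T) = c * ln(T / t_start), which follows from ds = c dT / T.
    """
    temps = [t_start + (t_end - t_start) * i / steps for i in range(steps + 1)]
    entropies = [C_WATER * math.log(t / t_start) for t in temps]
    return entropies, temps


def area_under_ts_curve(entropies, temps) -> float:
    """Trapezoidal estimate of the integral of T ds (heat per unit mass)."""
    area = 0.0
    for i in range(1, len(temps)):
        mean_temp = 0.5 * (temps[i] + temps[i - 1])
        area += mean_temp * (entropies[i] - entropies[i - 1])
    return area


s_points, t_points = heating_path(300.0, 350.0)
q_from_area = area_under_ts_curve(s_points, t_points)  # kJ/kg, from the diagram
q_direct = C_WATER * (350.0 - 300.0)                   # kJ/kg, from q = c * dT
print(q_from_area, q_direct)
```

The two numbers agree to within the discretization error of the trapezoidal rule, which is the sense in which heat is the area under the process curve.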

An isentropic process is depicted as a vertical line on a T-s diagram, whereas an isothermal process is a horizontal line. In the idealized case, compression in a pump, compression in a compressor, and expansion in a turbine are isentropic processes. The T-s diagram is therefore very useful in power engineering, because these devices are used in the thermodynamic cycles of power plants.

Note that the isentropic assumptions are only applicable to ideal cycles. Real thermodynamic cycles have inherent energy losses due to the inefficiency of compressors and turbines.

Irreversibility of Natural Processes

According to the second law of thermodynamics:

The entropy of any isolated system never decreases. In a natural thermodynamic process, the sum of the entropies of the interacting thermodynamic systems increases.

This law indicates the irreversibility of natural processes. Reversible processes are a useful and convenient theoretical fiction but do not occur in nature. According to this law, it is impossible to construct a device that operates on a cycle and whose sole effect is the transfer of heat from a cooler body to a hotter body. It follows that perpetual motion machines of the second kind are impossible.
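The direction imposed by the second law can be illustrated with a simple entropy balance. This is a minimal sketch, assuming two reservoirs large enough that their temperatures stay constant while a heat Q passes between them. The function name and the numerical values are illustrative assumptions.

```python
def total_entropy_change(heat_j: float, t_hot_k: float, t_cold_k: float) -> float:
    """Total entropy change in J/K when heat_j flows from hot to cold.

    The hot reservoir loses heat (-Q / T_hot) and the cold reservoir
    gains it (+Q / T_cold). A negative heat_j models heat flowing from
    the cold reservoir to the hot one.
    """
    return -heat_j / t_hot_k + heat_j / t_cold_k


# Spontaneous direction: 1000 J flows from 400 K to 300 K.
print(total_entropy_change(1000.0, 400.0, 300.0))   # positive, so allowed

# Reverse direction with no other effect: 1000 J flows from 300 K to 400 K.
print(total_entropy_change(-1000.0, 400.0, 300.0))  # negative, so forbidden
```

A negative total means the process cannot occur as the sole effect of a cycle. A refrigerator moves heat from cold to hot only because the work supplied to it produces at least a compensating entropy increase elsewhere.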

Entropy at Absolute Zero

According to the third law of thermodynamics:

The entropy of a system approaches a constant value as the temperature approaches absolute zero.

Based on empirical evidence, this law states that the entropy of a pure crystalline substance is zero at the absolute zero of temperature, 0 K, and that it is impossible, by any process, no matter how idealized, to reduce the temperature of a system to absolute zero in a finite number of steps. This allows us to define a zero point for the thermal energy of a body.

Absolute zero is the coldest theoretical temperature, at which the thermal motion of atoms and molecules reaches its minimum. This is a state at which the enthalpy and entropy of a cooled ideal gas reach their minimum value, taken as 0. Classically, this would be a state of motionlessness, but quantum uncertainty dictates that the particles still possess a finite zero-point energy. Absolute zero is denoted as 0 K on the Kelvin scale, −273.15 °C on the Celsius scale, and −459.67 °F on the Fahrenheit scale.